Technology today is accelerating so fast it’s hard to comprehend. In the latest step towards a future that looks more AI-controlled by the second, a study at Stanford University found that a computer algorithm could guess with striking accuracy whether a person is straight or gay.

When shown one photo each of a gay and a straight man, this machine intelligence correctly told them apart 81% of the time; for women, the figure was 74%. It is far from a perfect determinant of sexual orientation, but it has made it more apparent, in case it wasn’t already, that a person’s sexual orientation is not a choice but something you’re born with. It also proved far more accurate than human judges, who managed only 61% for men and 54% for women.

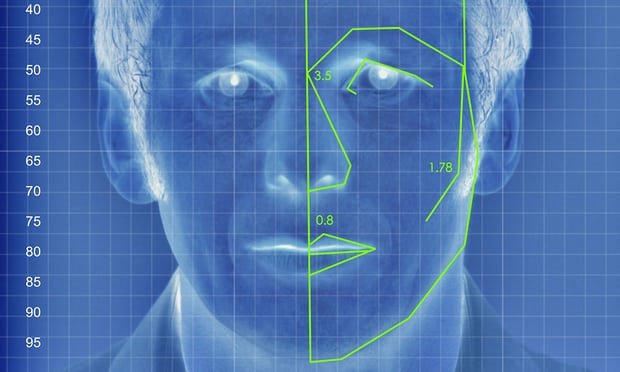

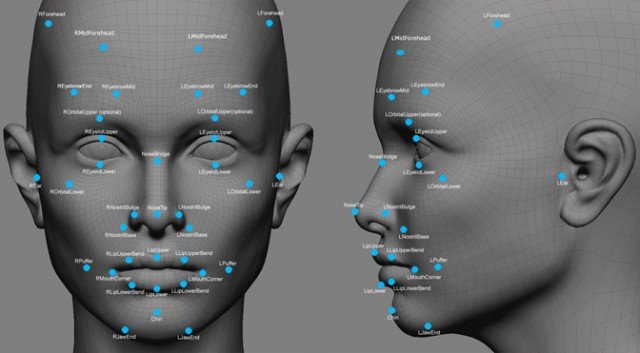

For the study, which was published in the Journal of Personality and Social Psychology and first reported in the Economist, researchers Michal Kosinski and Yilun Wang used 35,326 facial images that men and women had posted publicly on a US dating website. The researchers believe faces contain much more information about sexual orientation than the human brain can perceive and interpret. To test this, they used deep neural networks, a sophisticated mathematical system that learns to analyse visuals from a large dataset, to extract features from the images, and then fed those features into a statistical model that classified each face.

The research also turned up some unexpected physical differences: gay men tended to have narrower jaws, longer noses and larger foreheads than straight men, while gay women tended to have larger jaws and smaller foreheads than straight women.

As illuminating as this may be about the human condition, the implications of this form of artificial intelligence are far-reaching and morally dubious, to put it mildly. For one, there are billions of facial images stored on social media sites and in government databases, which could be used to detect people’s sexual orientation without their consent.

More alarming still, if this technology fell into the wrong hands, the consequences could be disastrous. Governments that still prosecute LGBT people could, in theory, use it to conduct mass ‘cleansings’. Given these grim possibilities, it’s easy to see why some consider even creating this technology controversial. However, according to Nick Rule, an associate professor of psychology at the University of Toronto, there is a compelling reason to develop and test it.

“What the authors have done here is to make a very bold statement about how powerful this can be. … Now we know that we need protections.”